Hey Everyone,

I actually think EVI 2 was a more important announcement than it got credit for, overshadowed in part by other bigger announcements at the same time in the AI space.

I find Hume AI’s product very intriguing. Hume AI describes itself as an Empathic AI research lab building multimodal AI with emotional intelligence. Experience their API at demo.hume.ai.

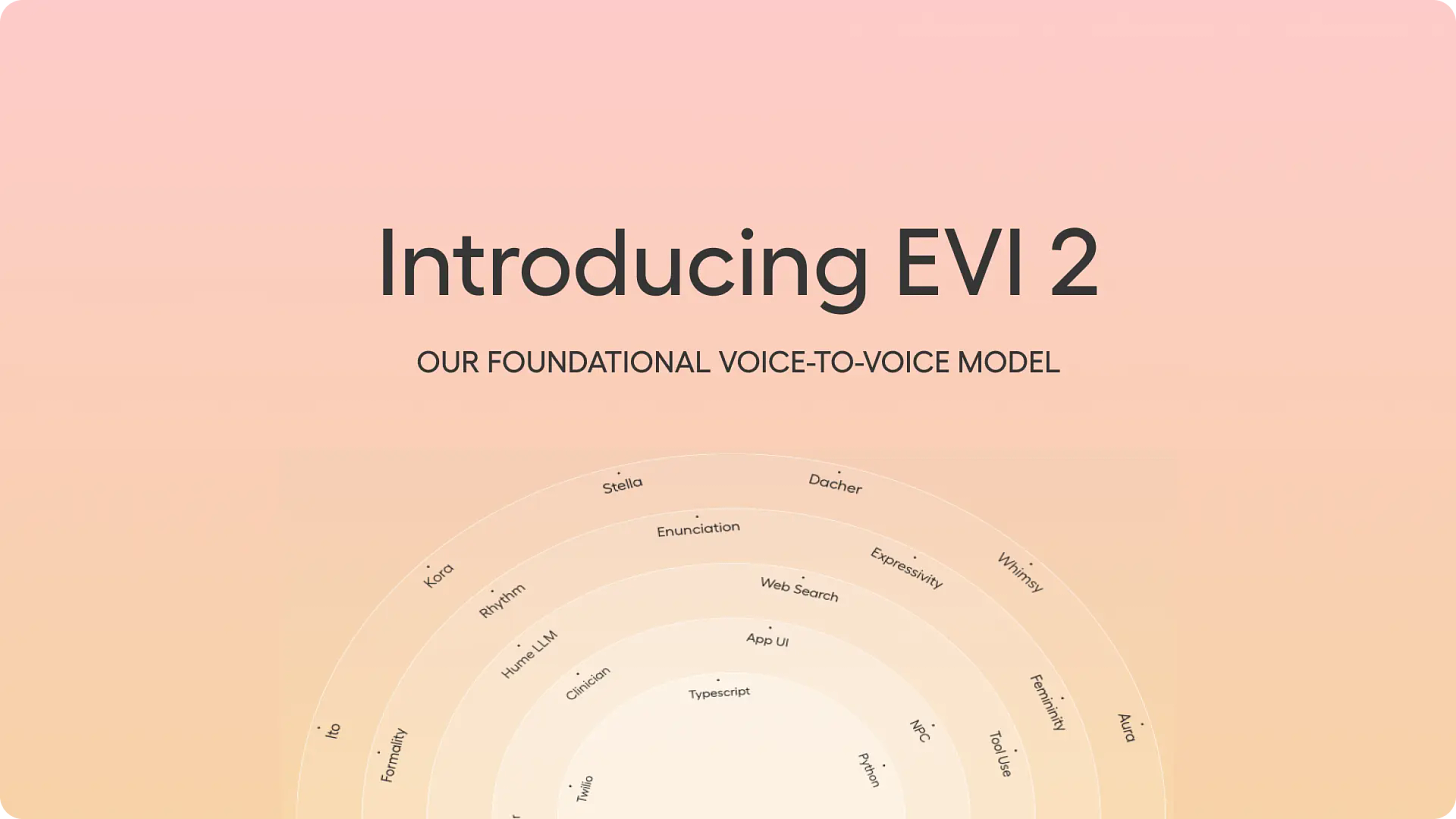

They recently introduced Empathic Voice Interface 2 (EVI 2), their new voice-to-voice foundation model. EVI 2 merges language and voice into a single model trained specifically for emotional intelligence.

Their CEO is Alan Cowen. They also launched a new iOS app that you can try here.

Hume AI, a startup founded by a psychologist who specializes in measuring emotion, gives some top large language models a realistic human voice. - Wired

The idea of voice-AI being empathetic really resonates with me.

It is one of the first AI models with which you can have remarkably human-like voice conversations.

It can converse rapidly and fluently with users with subsecond response times, understand a user’s tone of voice, generate any tone of voice, and even respond to some more niche requests like changing its speaking rate or rapping.

It can emulate a wide range of personalities, accents, and speaking styles and possesses emergent multilingual capabilities.

At a higher level, EVI 2 excels at anticipating and adapting to your preferences, made possible by its special training for emotional intelligence.

It’s trained to maintain characters and personalities that are fun and interesting to interact with. Put together, EVI 2 is designed to emulate the ideal AI personality for each application it is built into and each user.

Featuring a bold new and improved AI voice named Kora 💁‍♀️ and integrating Claude 3.5 Sonnet into its responses, EVI is ready to listen, answer, and explore → https://apple.co/3zaFVkV

A lot of people have heard of ElevenLabs, while Hume AI is a bit less well known. Still, its tech could become important.

The model can now engage in remarkably human-like conversations with subsecond latency, averaging around 500ms – a 40% reduction compared to EVI 1.

Launch in a Nutshell

Multimodal Integration: EVI 2 seamlessly integrates voice and language processing, allowing it to understand and generate both text and audio. This integration enhances its ability to engage in natural, human-like conversations.

Voice Quality and Speed: The model offers improved voice quality with more natural-sounding speech, better word emphasis, and higher expressiveness. It also features a 40% reduction in latency, providing faster response times averaging around 500 milliseconds.

Emotional Intelligence: EVI 2 is designed to understand the emotional context of user inputs and generate empathetic responses. This capability is reflected in both the content and tone of its responses, making interactions more engaging and emotionally attuned.

Customization Options: Developers can create custom voices using a new voice modulation method that adjusts parameters like gender, nasality, and pitch. This feature allows for tailored voices without relying on traditional voice cloning methods.

Cost-Effectiveness: Despite its advanced capabilities, EVI 2 is more cost-effective than EVI 1, with a 30% reduction in pricing to $0.0714 per minute.

Emerging Capabilities: The model is expected to support more languages and handle complex instructions as ongoing improvements are made. It is currently available in beta for developers to integrate into applications.
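
To put the pricing and latency figures above in perspective, here is a quick back-of-the-envelope calculation. It is only a sketch: it assumes the 30% and 40% reductions are measured against EVI 1 and that the quoted numbers are exact, so the EVI 1 figures it prints are derived here, not quoted from Hume. The function names are my own.

```python
# Back-of-the-envelope math from the launch figures quoted above.
# Assumption: both percentage reductions are measured against EVI 1.

EVI2_PRICE_PER_MIN = 0.0714  # USD per minute, as quoted
PRICE_REDUCTION = 0.30       # 30% cheaper than EVI 1, as quoted
EVI2_LATENCY_MS = 500        # approximate average latency, as quoted
LATENCY_REDUCTION = 0.40     # 40% lower than EVI 1, as quoted


def implied_baseline(new_value: float, reduction: float) -> float:
    """Recover the old value from the new value and a fractional reduction."""
    return new_value / (1.0 - reduction)


def conversation_cost(minutes: float, price_per_min: float = EVI2_PRICE_PER_MIN) -> float:
    """Cost in USD of a conversation lasting the given number of minutes."""
    return minutes * price_per_min


if __name__ == "__main__":
    old_price = implied_baseline(EVI2_PRICE_PER_MIN, PRICE_REDUCTION)
    old_latency = implied_baseline(EVI2_LATENCY_MS, LATENCY_REDUCTION)
    print(f"Implied EVI 1 price: ${old_price:.4f} per minute")
    print(f"Implied EVI 1 latency: {old_latency:.0f} ms")
    print(f"One hour of EVI 2: ${conversation_cost(60):.2f}")
```

Under those assumptions, EVI 1 would have cost about $0.102 per minute with latency a little over 800 ms, and an hour-long conversation on EVI 2 comes to roughly $4.28.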

Their emphasis on empathy reminds me of Anthropic, so I could see Anthropic acquiring them one day.

🗣️ Try their interactive demo: https://app.hume.ai

📱 Try their iOS App: https://apps.apple.com/us/app/hume-your-personal-ai/

🔧 Access EVI 2 API: https://platform.hume.ai/

I’ve been waiting for Voice-AI to get better for many years, likely well over a decade. Generative AI has, it seems, enabled a lot of important advances.

Creating voices without voice cloning feels more aligned with a better internet.

Mental Health Apps

You can imagine this tech in a lot of real-world applications: EVI 2 aims to optimize AI for human well-being by adapting its personality to user preferences, making it suitable for various applications such as virtual assistants, customer service bots, and mental health apps.

Virtual Assistants

Customer Service bots

Mental Health apps

Healthcare administrative assistants

So the business use cases could be fairly broad. The product is oriented toward the real world and could apply in any number of service sectors.

In late March 2024, they completed a $50 million Series B. Technically it is a Google spin-off. Dr. Alan Cowen is a former Google researcher and scientist best known for pioneering semantic space theory, a computational approach to understanding emotional experience and expression that has revealed nuances of the voice, face, and gesture now understood to be central to human communication globally.

With OpenAI shipping its Advanced Voice Mode, Amazon upgrading Alexa, and Apple upgrading Siri, how voice assistants respond to us in the coming years should be a lot better, smarter and more human-like. A compassionate AI is also an interesting dynamic considering the technological loneliness and unhappiness of young people who have grown up on their smartphones and in apps.

I also just like Hume AI’s pitch:

Created by leading emotion scientists and AI researchers at Hume AI, EVI is the first AI with emotional intelligence. It speaks like a human and understands you better than any chatbot 🗣️

I like the idea of an AI startup that is bringing something positive into the world but doesn’t necessarily take itself too seriously or promise some unrealistic end-game.

By processing both voice and language in the same model, EVI 2 has enhanced emotional intelligence capabilities.

Making AI, robots and digital assistants more human-like is a net gain for the entire world and it’s going to happen, one way or another.

AI voice products have the potential to revolutionize our interaction with technology. More importantly, they could humanize many of the digital spaces we frequently turn to, both today and in a potentially more Metaverse-like era to come.

In theory, EVI 2 emphasizes emotional intelligence and can adjust itself to user preferences and needs, providing a more interesting and enjoyable communication experience.

An AI with Emotional Intelligence?

What fascinated us about the 2013 movie Her was how natural the AI felt.

A standout feature of EVI 2 is its advanced emotional intelligence. By processing voice and language simultaneously, the model can better understand the emotional context of user inputs and generate empathic responses in both content and tone. This capability allows EVI 2 to adapt its personality and speaking style to suit different applications and user preferences.

The demos show their model has a ways to go, but it’s certainly a step in the right direction. The tones and expressions are exaggerated, but anything is better than the years we spent with dumb and bland voice assistants that never came to much.

It is available to talk to via their app and to build into applications via their API (in keeping with their guidelines).

Try it here: demo.hume.ai

Hume AI has achieved a lot on a fairly medium-sized budget.

An Era of Empathetic LLMs?

Before Inflection was acquired by Microsoft, they were on a good path with Pi. So imagine that but for Voice-interactions with Hume AI.

Their new voice Kora is also available to developers through the EVI API. Start building at http://beta.hume.ai

✨EVI has a number of unique empathic capabilities ✨

1. Responds with human-like tones of voice based on your expressions.

2. Reacts to your expressions with language that addresses your needs and maximizes satisfaction.

3. EVI knows when to speak, because it uses your tone of voice for state of the art end-of-turn detection.

4. Stops when interrupted, but can always pick up where it left off.

5. Learns to make you happy by applying your reactions to self-improve over time.

What are the limits of voice-AI technology in the Generative AI era? While EVI 2 is currently available in beta, Hume plans to continue improving the model's reliability, language support, and instruction-following capabilities in the coming weeks. The company is also working on a larger version of the model, EVI-2-large, which will be announced soon.

It makes sense that Claude would be attuned to Hume AI’s creations.

Read the story by Wired.

While ElevenLabs has gone full product, here we have a bit more deliberation. Importantly, EVI 2 is designed to prevent voice cloning without modifications to its code, addressing unique risks associated with this capability.

Like OpenAI’s Advanced Voice Mode for ChatGPT, Hume is far more emotionally expressive than most conventional voice interfaces.

In an era of things like social commerce and more immersive and helpful personalized chatbots, I think voice and language will make all the difference. EVI 2 represents a significant step towards more natural, emotionally intelligent, and personalized AI interactions.

What makes Hume AI’s EVI Special?

While OpenAI’s Advanced Voice Mode makes us take these things for granted, we shouldn’t. Just a few years ago this would have been fairly impressive:

A universal voice interface: a single API for transcription, frontier LLMs, and text-to-speech.

End-of-turn detection: uses your tone of voice for state-of-the-art end-of-turn detection, eliminating awkward overlaps.

Interruptibility: stops speaking when interrupted and starts listening, just like a human.

Responds to expression: understands the natural ups and downs in pitch & tone used to convey meaning beyond words.

Expressive TTS: generates the right tone of voice to respond with natural, expressive speech.

Aligned with your application: learns from users' reactions to self-improve by optimizing for happiness and satisfaction.
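
The turn-taking behaviors in this list (detecting the end of a turn, stopping when interrupted, picking up again afterwards) can be pictured as a small state machine. The sketch below is purely illustrative: every class, method, and field name is hypothetical and none of it is Hume's API. It also assumes the end of a turn simply arrives as a method call, whereas the real system is described as inferring it from tone of voice.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import List, Optional


class State(Enum):
    LISTENING = auto()
    SPEAKING = auto()


@dataclass
class TurnTaker:
    """Toy model of interruptible turn-taking (hypothetical, not Hume's API)."""

    state: State = State.LISTENING
    pending: List[str] = field(default_factory=list)  # sentences not yet spoken
    spoken: List[str] = field(default_factory=list)   # sentences already spoken

    def user_finished_turn(self, reply_sentences: List[str]) -> None:
        """End of turn detected: queue a reply and start speaking."""
        self.pending = list(reply_sentences)
        self.state = State.SPEAKING

    def speak_next(self) -> Optional[str]:
        """Speak one queued sentence; return None when not speaking."""
        if self.state is not State.SPEAKING or not self.pending:
            self.state = State.LISTENING
            return None
        sentence = self.pending.pop(0)
        self.spoken.append(sentence)
        if not self.pending:
            self.state = State.LISTENING  # reply finished, hand the turn back
        return sentence

    def user_interrupts(self) -> None:
        """Stop talking immediately but keep the unspoken remainder."""
        self.state = State.LISTENING

    def resume(self) -> None:
        """Pick up where the reply left off, if anything remains."""
        if self.pending:
            self.state = State.SPEAKING


if __name__ == "__main__":
    bot = TurnTaker()
    bot.user_finished_turn(["Sure.", "Here is the first part.", "And the second."])
    print(bot.speak_next())
    bot.user_interrupts()  # the user cuts in mid-reply
    bot.resume()           # later, continue from the same point
    print(bot.speak_next())
```

The point of keeping `pending` intact on interruption is that stopping and forgetting are separate decisions, which is what lets the assistant resume rather than start over.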

Anyways, this is something you need to experience for yourself rather than just read about. I can only imagine how our service sector and digital experiences could be improved by tech like this. More generally, its potential applications span various industries, from customer service to entertainment, promising to reshape how we interact with AI in our daily lives.

While people fear Generative AI getting more persuasive (and possibly manipulative), I’m more interested in how AI becomes more empathetic. That’s why this AI startup’s recent launch really stood out to me.